Technical Debt: What It Is, Types, and How to Manage It

Anyone working in software, especially those on the ground floor building it, has likely heard the term "technical debt" thrown around frequently. Even without knowing much about its definition, most can agree that it is inherently a bad quality in your codebase. But, just like financial debt, not all debt is bad when chosen strategically. However, rampant, unintentional technical debt is generally not a good thing either. In this post, we will go over what technical debt is, when it's good and bad, and touch on some of the ways teams can work towards managing it.

What Is Technical Debt?

Technical debt (also called tech debt) is the implied cost of future work that arises when software development teams choose a quick or incomplete solution over a cleaner, better-designed approach. Like financial debt, it accrues interest over time, making future changes more expensive, slower, and riskier. The longer you ignore it, the more it compounds. Most teams don't have a debt problem at the start of a project. They earn it by making trade-offs that felt right at the time but left obligations behind.

The term technical debt was coined by Ward Cunningham in 1992. His debt metaphor compared the cost of shipping imperfect code to the cost of borrowing money. You get immediate benefit, but you'll pay it back later with interest. That interest manifests as slower feature development, higher bug rates, and engineering teams burning out while trying to work around the accumulated mess.

The Financial Debt Metaphor

Think of it this way: you can rush a database migration and cut corners on testing. You ship faster. But you've just taken out a loan. You'll be paying interest every time that sloppy migration causes a system failure in production. Every time a new developer wastes a week understanding why the schema is inconsistent. Every time you can't scale because the migration was never designed for it.

The problem isn't that you borrowed. It's that many teams borrow without ever paying back. They treat debt as a permanent feature of the codebase instead of a problem to solve. That's when technical debt stops being a trade-off and becomes a crisis, with long-term consequences that affect every development cycle and make it exceedingly hard to innovate.

Technical Debt vs. Bad Code

Not all bad code is debt. A junior engineer who doesn't yet know how to write code well is a learning problem, not a debt problem. Technical debt refers specifically to decisions made with full knowledge of the trade-off. You knew the shortcut had consequences and took it anyway because the alternative was too expensive in development time or required more resources than you had.

Bad code you didn't mean to write isn't debt. It's just bad code (for the most part). Debt is the tax you pay for having made a conscious choice, usually under tight deadlines.

Types of Technical Debt

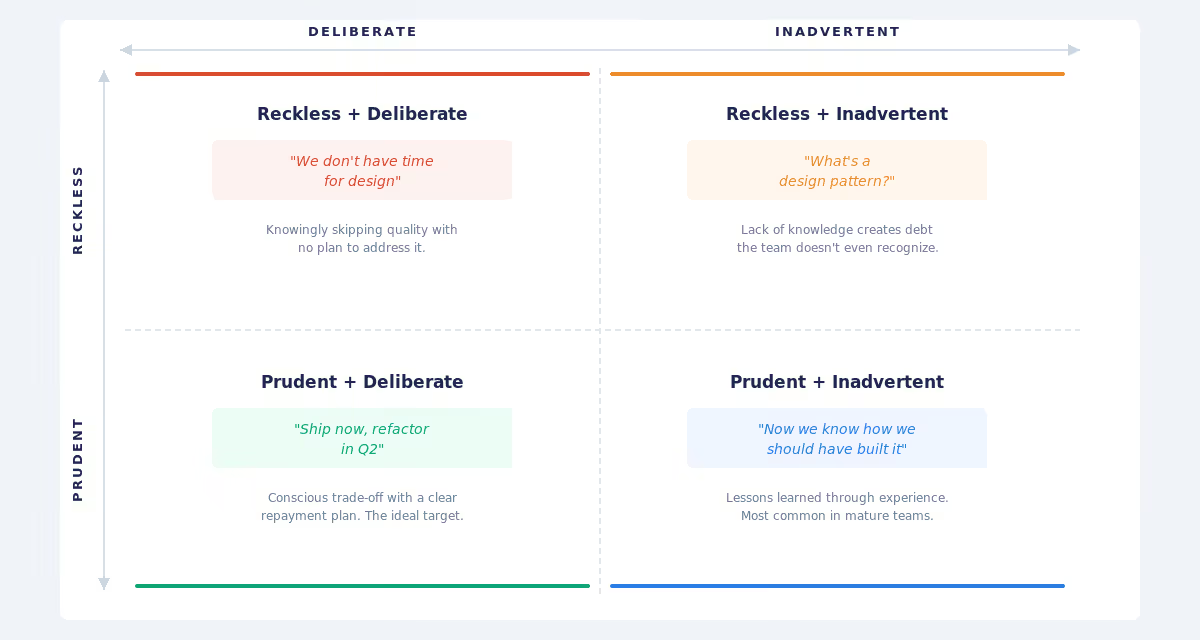

Technical debt shows up everywhere in your system and can go well beyond just your code. Martin Fowler's Technical Debt Quadrant gives us a useful framework for categorizing where these trade-offs live.

Deliberate vs. Inadvertent Debt

Some debt is intentional technical debt. Your team decided to ship a hardcoded configuration instead of building a full feature flag system because you were two weeks from a launch deadline. That's deliberate debt. You knew what you were doing, and you accepted the future costs.

Other debt creeps in as unintentional technical debt. A developer added a workaround for a performance issue that no one ever documented. Three years later, nobody dares touch it. That's inadvertent debt. It's harder to manage because development teams don't even know it exists.

Deliberate, reckless debt is the most dangerous. Inadvertent prudent debt is the most common in mature teams. Both need different treatment strategies, and technical debt accumulates fastest when teams can't distinguish between them.

Architectural Debt

Architecture debt is the heaviest kind. You made a fundamental choice about how your system is organized, and now that choice is constraining you.

A monolith that can't be broken into services is architectural debt. Tightly coupled components in an authentication layer that you now need to replace. A database schema that conflates two business concepts so thoroughly that splitting them would require rewiring half your system. These decisions ripple through your entire organization, creating maintenance challenges for years to come.

The choices that lead to this type of debt are pretty common. Let’s say you chose microservices and now you're drowning in increased complexity. You built a custom framework that worked well until it became unmaintainable legacy code. You picked a database that solved yesterday's problem, but can't scale to tomorrow's. These issues lie deep in the architecture of the service or application, vs simply going in and doing a quick code refactor.

One way to spot these issues is through live architecture visibility, which helps catch them early, before they calcify across the entire system. Being able to see your full technology estate with 1000+ component visibility lets you spot the decisions that are beginning to constrain you, not after three years of pain.

Code Debt

Messy functions. Code complexity that spirals out of control. Redundant code and duplicated algorithms. Missing documentation. Lack of tests. This is the most visible kind of debt and the kind most teams focus on fixing.

Code debt slows you down locally, on individual stories, and on new features. But it's usually the smallest of your debt problems. If all your debt is code-level, you're in decent shape. You can refactor existing code. You can add tests. You can improve long-term code quality. All of these fixes are significantly easier than issues that lie deeper in the architecture (as mentioned above).

Infrastructure and DevOps Debt

Your deployment pipeline is a series of manual scripts that only one person understands. Your monitoring setup is a patchwork of tools that don't talk to each other. Your infrastructure is provisioned by hand with tweaks accumulated over two years. This is process debt.

This debt accumulates fast. It makes rolling back changes harder. It makes scaling risky. It burns your ops team. And it's usually invisible to the developers writing code.

In real life, this might look like this: A team discovers they're running 47 different versions of Node.js across their infrastructure. Not by design but by accident, over five years. Now they can't upgrade npm packages without coordinating across 47 different deployments.

Security Debt

You implemented a homegrown authentication system instead of using a standard. You never rotated secrets. Your API has known security vulnerabilities, but fixing them would break your largest customer's integration.

Security debt is unique because the interest compounds invisibly. You don't see the cost until you're breached. By then, it's too late. Architectural debt often lurks here, too, where poor architectural choices lead to poor security, which then means you have a hard-to-fix security problem since the underlying technical debt makes every code change risky.

Common Causes of Technical Debt

One thing about technical debt is that how it comes to be can usually be traced back to some extremely common causes. Most of these are pretty easy to follow cause-and-effect patterns, which means that understanding what factors create these types of debt can be a good way to manage and reduce them in the first place. Let’s look at a few of the core ones below:

Time Pressure

This is by far the most honest cause. Developers and architects get pressed for time through sprint deadlines, quarterly business needs, and promises made to customers before the team was consulted. Time pressure forces choices, and teams working to meet deadlines will take short-term gains over long-term sustainability almost every time.

The problem is that teams rarely go back and pay it down. This isn’t intentional; they generally do want to fix it, but the inevitable always seems to happen: the quarter ends, a new deadline arrives, and the debt stays.

Lack of Architecture Visibility

You can't pay down debt you can't see. Many teams have no idea what their actual technology estate looks like. They have scattered architecture docs (if any). They rely on tribal knowledge. Nobody truly owns the architecture picture from end-to-end.

Without visibility, you inherit existing technical debt from previous teams and can't distinguish deliberate choices from mistakes. You repeat the same bad patterns because you can't see the patterns. Legacy systems grow more tangled, and the development process slows to a crawl.

Architecture visibility means having a living, up-to-date model of your system. Not a wiki that's three years out of date or tribal knowledge from a group of peers that has been working on the same system since the mid-90s. To figure out the baseline and remediate, you need an accurate representation of what's running, how it connects, and the trade-offs involved.

Misaligned Incentives

Employees, in general, like to keep their bosses happy. Meeting the grade usually comes in the form of tracked metrics that may not reflect what's actually needed to build quality software. You see this a lot: engineers are measured by velocity, not software quality, and the team is rated by feature count, not maintainability. Your company is optimized for speedy delivery, not long-term engineering health.

When the incentives don't reward paying down debt, nobody pays it down. They optimize for what they're measured on.

Missing Data-Driven Decision Making

Teams make architecture decisions in meetings based on hunches and opinions. They guess at the cost of refactoring versus building new. They don't have data on which services consume the most resources or which dependencies are most critical.

This leads to debt because you don't know the real trade-offs. You're making multi-million-dollar architecture decisions with incomplete information, and the non-strategic results of this lead to costly rework down the line.

How to Identify Technical Debt

So, when the above causes affect your technical debt levels, how do you know? Luckily, this is another piece of the puzzle that is generally pretty easy to spot. Most dev teams can spot these issues a mile away, even if they don’t realize tech debt is the underlying issue.

Code Reviews and Complexity Metrics

Pull requests that have 15 rounds of feedback. Code that nobody wants to touch. Functions with 10+ parameters. Cyclomatic complexity that's off the charts.

These are signals. They mean people are struggling to understand the code. That's debt that is accruing interest right now, in real time, as developers waste manual effort just reading your code.

Tools can measure this. Static analysis tells you where complexity is highest. But the best signal is your team's behavior: do they avoid certain files? Do they copy-paste logic instead of reusing it? That's usually a sign that the code is (or will be) hard to work with.

Developer Feedback and Pain Points

Ask your developers: "What part of our codebase makes you slowest?" or "What can't we change without fear?" You'll usually get honest answers. They know where the pain points are because they're struggling with them daily. The problem is that their feedback rarely gets translated into engineering time; the coveted “tech debt/refactor sprint” that most teams request goes unanswered. Instead, it stays as grumbling in a Slack channel.

Performance and Reliability Signals

From the operational side, you’ll see slow deployments, high incident rates, and rollbacks that take hours instead of minutes. On the runtime side, issues include performance problems under peak load, degraded performance during traffic spikes, and system failures that cascade across services. These aren't always code-quality problems; they're often symptoms of architectural debt.

If your system is brittle, if it breaks under load, if it's hard to deploy safely, you have debt. Maybe it's infrastructure. Maybe it's architecture. But the signals are there. The effort required to fix things keeps growing, and bug fixes keep taking longer. The challenge is to connect these signals to specific architectural decisions.

Measuring Technical Debt

You can't manage what you don't measure. But most teams stop short of measuring metrics that signal technical debt since measuring it is harder than measuring code quality. Here are a few ways you can get some idea of where your application or organization sits in terms of technical debt load and impact:

Lines of Code Needing Refactoring: SLOC tools count how many lines are complex enough to warrant attention. Useful as a relative measure. Compare your current score to last quarter. Is it growing? Shrinking? Stable?

Cyclomatic Complexity: How many branches does a function have? Higher numbers mean harder to understand and test. A function with complexity over 15 is usually a sign of debt.

Test Coverage: Low coverage is a signal, though not definitive. Perfect coverage doesn't mean no debt. But if you're below 70% coverage, you probably have architectural debt you haven't refactored yet because you're afraid of breaking things.

Deployment Frequency and Lead Time: The DORA metrics tell you something about your debt. If deployment frequency is dropping and lead time is growing, debt is slowing down new development. Shopify's 25% rule quantifies this: allocate 25% of engineering capacity to debt reduction and maintaining infrastructure. If you're below that, debt will grow.

Mean Time to Recovery (MTTR): How fast can you fix production incidents? If it's growing, debt is making your system more fragile.

Architectural Complexity Metrics: Can you answer these questions?

- How many services do you have? How many are actually used?

- What are your most critical dependencies? Do you know?

- What's your blast radius if Service X goes down?

- Can a single team understand its service's entire dependency graph?

If you can't answer these, you most likely have architectural debt and probably have a visibility problem beneath it.

How to Manage and Reduce Technical Debt

Establish a Clear Definition

Your team needs to agree on what debt looks like. Is a function with 500 lines of code debt? Is using an unsupported library debt? Is a hardcoded configuration debt?

Without a shared definition, some people will want to refactor constantly while others want to ship fast. You'll have endless unproductive arguments.

The key is for teams to define it together, write it down, and to make it part of their definition of done. "Code debt accepted here" should be explicit, not implicit.

Prioritize Debt in Sprint Planning

Most software development teams don't allocate any time to paying down debt in their sprints. Everything is new feature development. Every sprint is packed. Debt never gets priority because it never shipped a feature.

Like the Shopify tactic we discussed earlier, you need to fix this mindset and allocate time. Some teams run dedicated debt sprints, while others use the 25% rule: reserve a quarter of sprint capacity for addressing technical debt. Regardless of the direction, reserve something.

When you prioritize debt alongside features, you send a signal that it matters. You also prevent technical debt from compounding out of control. Small regular investments in paying down debt are much cheaper than emergency refactors or arguing for an increased timeline and budget for every new project.

Automate Testing and Quality Gates

You can't refactor safely without tests. Automated testing and continuous integration are your safety net. But you also can't ship code with low coverage if you have automated gates. So the true fix here is to set up quality gates. This means making coverage requirements mandatory and (when possible) making complexity limits enforced by your CI/CD pipeline. You should also consider making architectural rules automatable. If the code breaks the rules, the pipeline stops it. Quick fixes that skip these gates are how teams end up doing a poor job of managing debt.

This shifts debt from being something you discover after it's shipped to something you catch before it lands. Preventive medicine, rather than emergency surgery, reduces manual effort throughout the entire development process.

Modernize Architecture Incrementally

Although it is tempting, don't try to rewrite everything at once. This is likely to actually create more debt, not less.

This is where tactics like the strangler fig pattern can help. Build the new architecture alongside the old, and gradually move traffic over. This allows teams to adopt modern technologies incrementally, keep both old tech running until it is completely unused, then decommission it. Catio's modernization solution supports exactly this: providing execution blueprints that help teams plan incremental transitions with real cost and timeline data.

Data-driven recommendations help here. Instead of debating whether to refactor or rewrite a service, you evaluate it with real cost, risk, and timeline data. You can actually answer: "Should we modernize this service or build something new?" based on facts, not feelings. That's how you enable future development without creating more debt.

Architecture Governance as a Technical Debt Strategy

Here's what most teams get wrong: they treat debt as a code problem. They hire better developers, add more static analysis, and sometimes run refactoring sprints.

But if you're creating debt faster than you can pay it down, the real problem is decision-making. Software development suffers when you make architectural decisions without proper governance.

Architecture governance means:

- Visibility into decisions being made. You should know when teams are choosing a new technology, breaking up a service, or integrating with a third party. You should see the decision and its justification. Many organizations have no record of why certain architectural choices were made. That's a governance gap.

- Data-driven decision criteria. When choosing between frameworks, databases, or architectural patterns, do you have criteria? Cost, complexity, team expertise, scalability, security profile? Or are you just picking whatever the loudest engineer wants?

- Architecture review processes that actually work. Not rubber stamps. Real reviews where you challenge decisions, propose alternatives, and force teams to think through trade-offs. This prevents the low-quality decisions that create debt in the first place.

- Connection between architecture and business outcomes. Every architecture decision should map to a business outcome. Faster feature delivery. Better security. Lower cost. Cost efficiency. If you can't articulate why a decision matters to the business, you're probably creating debt.

An AI architecture copilot helps you apply this governance at scale. Instead of Architecture Review Boards meeting every three weeks, you have conversational decision support. Teams explore trade-offs in real time, see cost and risk data immediately, and make better decisions faster. The result is preventative: less debt created in the first place. That's worth more than paying down debt after the fact.

Technical Debt in the Age of AI

AI coding tools like Claude Code and Cursor have permanently changed how fast teams can produce software. Developers and their AI copilots can now generate, refactor, and ship code at a pace that would have been unthinkable three years ago. That speed is real. But it has a side effect nobody planned for: when code moves faster than architectural reasoning, every new line of code doesn't always add to the whole. It fragments it.

The new debt multiplier isn't bad developers. Instead, it's fast developers without architectural context.

An AI coding agent can produce a perfectly functional service in an afternoon. But if that service duplicates an existing capability, introduces a conflicting data pattern, or violates the team's modernization roadmap, it's debt. High-quality debt that looks clean in a code review but creates system-level incoherence.

This is a category of technical debt that compounds differently than traditional debt. It's not sloppy code necessarily. It's well-written code that doesn't fit the architecture. And it ships faster than any human review process can catch.

The pattern looks like this: five teams each use an AI coding tool to build their own authentication wrapper. Each implementation is solid. But now you have five authentication approaches instead of one. Patterns don't replicate. Standards drift. Local optimization breaks global coherence. The architecture fragments not because anyone made a bad decision, but because nobody gave the coding agent enough architectural context to make a good one.

The fix isn't to slow down AI code generation. The fix is to give it better direction. When coding agents receive execution-ready specs that include system boundaries, interface contracts, dependency constraints, and non-functional requirements, the code they produce stays aligned with architectural intent. That's the difference between code that compounds the system and code that splinters it.

Re-architecting for the AI era means pairing your coding IDE with an Architecture IDE that reasons at the system level. Catio produces the architectural direction, constraints, and guardrails. Your AI coding tools execute from those specs. The result is that teams keep the speed without inheriting the drift that AI coding agents tend to inject.

Conclusion

Technical debt isn't something that happens to you. It's something you create every time you choose a shortcut over the right solution. The goal isn't to eliminate it. It's to keep it manageable and manage technical debt before it manages you.

You do that through visibility, governance, and deliberate prioritization. You identify technical debt early. You create processes that catch bad decisions before they become embedded. You allocate capacity to paying it down consistently.

Most importantly, you stop treating debt as inevitable friction and start treating it as a problem with solutions.

If your team doesn't have clear visibility into your architecture, if decisions are made without data, if you don't know the actual cost and risk trade-offs you're making, you're creating debt blindly. That's the real risk.

Book a demo to see how architecture visibility and AI-powered decision-making can help you prevent debt instead of just cleaning it up after the fact.

FAQs

What is technical debt in Scrum?

In Scrum, technical debt refers to shortcuts taken during sprints that create future rework. It often accumulates when teams prioritize sprint velocity over long-term code and architecture quality. The common fix is allocating a percentage of sprint capacity (many teams use Shopify's 25% rule) to paying down existing debt alongside feature work. Without this discipline, the causes of technical debt multiply sprint over sprint until velocity plateaus.

Is technical debt always bad?

No. Deliberate, prudent technical debt is a legitimate engineering strategy. If you're racing to validate a product hypothesis and you knowingly cut a corner, that's a reasonable trade-off. The debt becomes a problem when it's untracked, unmeasured, or never paid down. Think of it like financial debt: a mortgage is reasonable, but maxing out credit cards with no repayment plan isn't.

What are the 4 quadrants of technical debt?

Martin Fowler's Technical Debt Quadrant maps debt along two axes: deliberate vs. inadvertent, and reckless vs. prudent. The four resulting quadrants are: Deliberate Reckless ("We don't have time for design"), Deliberate Prudent ("We'll ship now and refactor next quarter"), Inadvertent Reckless ("What's a design pattern?"), and Inadvertent Prudent ("Now we know how we should have built it"). Most mature teams accumulate inadvertent prudent debt. The dangerous kind is deliberate reckless, where teams knowingly skip quality with no plan to address it.

Who pays for technical debt?

Everyone. Engineers pay through slower development cycles and frustrating debugging sessions. Product teams pay through delayed feature delivery. The business pays through higher operational costs, increased incident rates, and lost competitive advantage. According to research from McKinsey, organizations spend roughly 10 to 20 percent of their technology budgets servicing technical debt. The cost is real, measurable, and usually much higher than teams working on it estimate.