System Architecture: A Complete Guide to Types and Design

Every engineering team that has ever said "we need to rewrite this" was really saying "we lost control of our system architecture." The code runs. The system still serves customers. But nobody can draw how the components interact on a whiteboard without three people correcting each other. System architecture is the discipline that prevents that slide into fog.

This guide covers what system architecture is, the components that make up any real system, the types you'll see in production, how to approach system design, and the common failure modes. It's written for engineering managers, CTOs, and architects who have to live with the decisions they make.

What Is System Architecture?

System architecture is the structural design of a system's components, their relationships, and the principles that guide how the system evolves over time. It describes how hardware, software, data, and system interfaces combine into a coherent whole that meets both functional and non-functional requirements. A well-defined system architecture gives development teams a shared mental model and a common language for decision-making across software development and operations.

ISO/IEC/IEEE 42010:2022 is the formal standard for architecture descriptions; it distinguishes the architecture itself from the description of that architecture. The Systems Engineering Body of Knowledge (SEBoK) captures the consensus definition: architecture comprises the "fundamental concepts or properties of a system in its environment embodied in its elements, relationships, and in the principles of its design and evolution." Less formally, architecture is the overall system structure plus the rules for how that structure changes.

System Architecture vs. Software Architecture

The two overlap but aren't identical. Software architecture covers the internal structure of a software application: modules, layers, APIs, and runtime behavior. System architecture is broader. It includes the software, hardware, network, data storage, external systems, and humans in the loop. For example, choosing microservices is a software architecture decision; running those services on Kubernetes across three cloud regions is a system architecture decision.

System Architecture vs. System Design

System architecture sets the high-level shape. System design fills in the details. Architecture answers "what are the components and how do they relate?" System design answers "how does each one actually work?" Teams use system architecture diagrams to lock in the big picture, and system design then produces the detailed technical specifications for each part.

Key Components of System Architecture

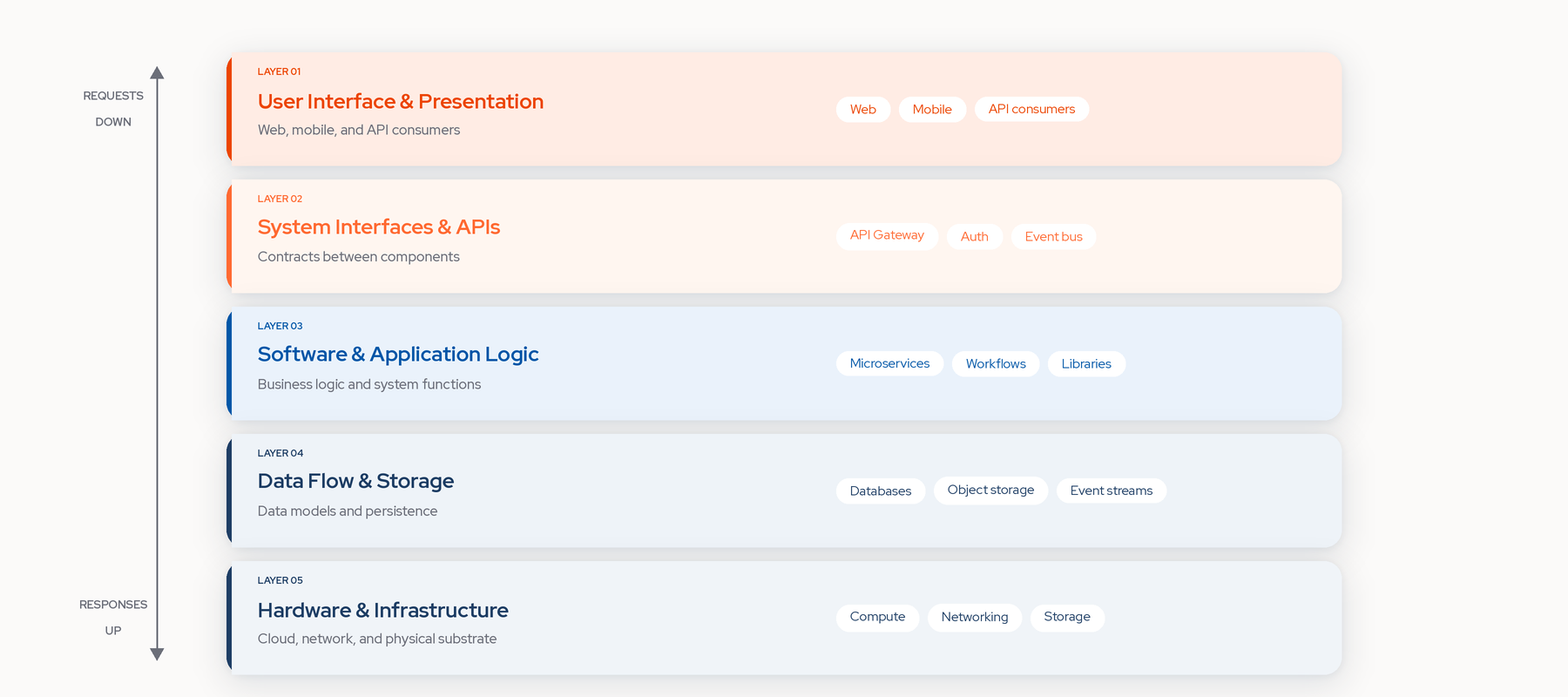

Every system architecture has the same basic building blocks: hardware, software, system interfaces, and data. The key components are consistent across system types; how you combine them is where software design decisions live.

Hardware and Infrastructure

The physical and virtual substrate on which the system runs. Servers, storage devices, networking, and, for most modern software systems, cloud services that abstract all of it. Cloud-native infrastructure uses managed services for elasticity, with infrastructure defined as code and autoscaled on demand. The cloud provider and underlying tech stacks become part of the overall system structure, not just hosting details.

Software and Application Layers

The code that implements business functions. Most software systems use layered architecture: a presentation layer with the user interface, a middle layer implementing business logic and system functions, and a data layer handling persistence. Separation of concerns between these layers keeps software systems maintainable as they grow.

System Interfaces and APIs

The contracts between system components. Interface management defines the boundaries where components interact, and it's where most integration bugs live. In service-based systems, a centralized API gateway typically manages traffic, user authentication, and routing across independent services. Good interface design is the difference between a payment service that can be swapped in a sprint and one that takes a quarter.
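To make the payment example concrete, here is a minimal Python sketch of an interface contract. Every name in it (`PaymentGateway`, `ChargeResult`, `FakeProviderA`, `FakeProviderB`, `checkout`) is hypothetical and stands in for whatever your system actually uses.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class ChargeResult:
    ok: bool
    reference: str


class PaymentGateway(Protocol):
    """The contract. Callers depend on this, never on a concrete provider."""

    def charge(self, customer_id: str, amount_cents: int) -> ChargeResult: ...


class FakeProviderA:
    """Hypothetical provider used only for illustration."""

    def charge(self, customer_id: str, amount_cents: int) -> ChargeResult:
        return ChargeResult(ok=amount_cents > 0, reference=f"A-{customer_id}")


class FakeProviderB:
    """A second hypothetical provider that honors the same contract."""

    def charge(self, customer_id: str, amount_cents: int) -> ChargeResult:
        return ChargeResult(ok=amount_cents > 0, reference=f"B-{customer_id}")


def checkout(gateway: PaymentGateway, customer_id: str, amount_cents: int) -> str:
    # Checkout only sees the contract, so swapping providers is a one-line change.
    result = gateway.charge(customer_id, amount_cents)
    return result.reference if result.ok else "declined"
```

Because `checkout` depends only on the contract, replacing `FakeProviderA()` with `FakeProviderB()` at the call site needs no change to the checkout logic.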

Data Flow and Storage

How information moves through the system and where it lives. Data flows include event streams, synchronous API calls, and scheduled batch jobs. Storage choices shape the data models the system can use. Data mesh distributes data management across domains to avoid a central bottleneck.

Types of System Architecture

The style you pick shapes almost every future decision. There's no universally best choice, only the right fit for your team size, workload, and operational maturity. These are the patterns you'll see most often.

Monolithic Architecture

Monolithic architecture is a software architecture style where the entire application is built, deployed, and scaled as a single indivisible unit, with all components interconnected in one codebase. Everything lives together: user interface, application logic, data models, and persistence. A simple monolithic design is fast to build, which is why most successful companies started as monoliths. The trade-off comes when the monolith outgrows the team's ability to hold it in their heads.

Client-Server Architecture

Client-server architecture splits the system into two roles: a central server provides resources, and a client consumes them. The web runs on this pattern. So do most mobile apps, most business software, and file-sharing services that aren't peer-to-peer. It's the default mental model for software development because it's simple and scales reasonably well.

Microservices Architecture

Microservices architecture breaks complex applications into smaller, independent services that run in their own processes and communicate through lightweight mechanisms such as HTTP/REST or messaging. Each service owns its own data models and business logic. The promise is independent deployment, independent scaling, and team autonomy. The cost is distributed systems complexity: network failures, eventual consistency, and observability overhead. That complexity is where most microservices migrations quietly fail.

Event-Driven Architecture

Event-driven architecture (EDA) is a design pattern where producing, detecting, and reacting to events are the primary communication mechanisms between system components, enabling asynchronous processing. Instead of service A calling service B, service A emits an event, and interested services react. EDA decouples producers from consumers, which scales well but makes causal reasoning about system behavior harder.
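The emit-and-react idea can be sketched with a tiny in-process event bus. This is an illustration only: a production system would usually put a message broker between producers and consumers so delivery is asynchronous, whereas this sketch calls handlers synchronously. The `EventBus` class, the `order_placed` event, and the handler names are all hypothetical.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """Minimal synchronous stand-in for a message broker."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        # The producer does not know who is listening, or whether anyone is.
        for handler in list(self._handlers.get(event_type, [])):
            handler(payload)


# Two independent consumers react to the same event.
emails: list[str] = []
stock: dict[str, int] = {"sku-1": 5}


def send_confirmation(event: dict) -> None:
    emails.append(event["customer"])


def reserve_stock(event: dict) -> None:
    stock[event["sku"]] -= event["qty"]


bus = EventBus()
bus.subscribe("order_placed", send_confirmation)
bus.subscribe("order_placed", reserve_stock)
bus.publish("order_placed", {"customer": "ada@example.com", "sku": "sku-1", "qty": 2})
```

The order service never calls the email or inventory code directly, which is the decoupling the pattern buys. It is also why tracing cause and effect gets harder: nothing in the publisher tells you what ran as a result.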

Serverless Architecture

Serverless architecture runs code as short-lived functions on infrastructure managed entirely by the cloud vendor. Teams skip managing servers; they write functions, and the software platform handles scaling, availability, and billing per invocation. Serverless excels at spiky workloads and struggles with long-running state or consistent low-latency performance.
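A serverless function is, at its simplest, a stateless handler that receives an event and returns a response. The sketch below assumes the `handler(event, context)` shape that AWS Lambda uses for Python functions; the event fields (`width`, `height`, `max_side`) are hypothetical, and the function only computes thumbnail dimensions rather than resizing a real image.

```python
import json


def handler(event: dict, context: object = None) -> dict:
    """Stateless function: everything it needs arrives in `event`."""
    width, height = event["width"], event["height"]
    max_side = event.get("max_side", 256)

    # Scale down so the longest side fits max_side; never scale up.
    scale = min(1.0, max_side / max(width, height))
    body = {"width": round(width * scale), "height": round(height * scale)}
    return {"statusCode": 200, "body": json.dumps(body)}
```

Nothing is kept between invocations, which is what lets the platform run zero or a thousand copies at once. It is also why long-running state is a poor fit.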

Layered Architecture

Layered architecture divides an application into layers with specific roles (presentation, application logic, data storage), promoting separation of concerns and simpler maintenance. It's the pattern inside most monoliths and many microservices. Its weakness is its strength inverted: cross-layer changes ripple everywhere.
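A minimal sketch of the three layers, with hypothetical names (`UserRepository`, `UserService`, `render_greeting`) and an in-memory dict standing in for a real database:

```python
from typing import Optional


class UserRepository:
    """Data layer: persistence only, no business rules."""

    def __init__(self) -> None:
        self._rows: dict[int, str] = {}

    def save(self, user_id: int, name: str) -> None:
        self._rows[user_id] = name

    def find(self, user_id: int) -> Optional[str]:
        return self._rows.get(user_id)


class UserService:
    """Application layer: business rules, no storage or display details."""

    def __init__(self, repo: UserRepository) -> None:
        self._repo = repo

    def register(self, user_id: int, name: str) -> str:
        cleaned = name.strip()
        if not cleaned:
            raise ValueError("name must not be empty")
        normalized = cleaned.title()
        self._repo.save(user_id, normalized)
        return normalized

    def name_of(self, user_id: int) -> Optional[str]:
        return self._repo.find(user_id)


def render_greeting(service: UserService, user_id: int) -> str:
    """Presentation layer: formatting only; it talks to the service, not the repo."""
    name = service.name_of(user_id)
    return f"Hello, {name}!" if name else "Hello, guest!"
```

Each layer calls only the one directly below it. The ripple effect is visible too: adding a field such as an email address would touch all three.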

Hexagonal Architecture (Ports and Adapters)

Hexagonal architecture, also called ports and adapters, isolates business logic at the center and exposes it through explicit ports that adapters implement (databases, UI frameworks, external APIs). Swapping a database or framework means swapping an adapter rather than rewriting core logic. It's especially useful when modernization will later touch the infrastructure beneath complex systems.
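As a sketch of the idea, the core function below depends on a port (`OrderStore`), and two adapters implement it: one in memory, one on SQLite from Python's standard library. The names and the one-table schema are hypothetical.

```python
import sqlite3
from typing import Optional, Protocol


class OrderStore(Protocol):
    """The port: what the core needs from storage, and nothing more."""

    def save(self, order_id: str, total_cents: int) -> None: ...
    def total(self, order_id: str) -> Optional[int]: ...


def place_order(store: OrderStore, order_id: str, prices_cents: list[int]) -> int:
    """Core logic: knows the port, knows nothing about the storage technology."""
    if not prices_cents:
        raise ValueError("an order needs at least one item")
    total = sum(prices_cents)
    store.save(order_id, total)
    return total


class InMemoryOrderStore:
    """Adapter backed by a dict, handy for tests."""

    def __init__(self) -> None:
        self._totals: dict[str, int] = {}

    def save(self, order_id: str, total_cents: int) -> None:
        self._totals[order_id] = total_cents

    def total(self, order_id: str) -> Optional[int]:
        return self._totals.get(order_id)


class SqliteOrderStore:
    """Adapter backed by SQLite; the schema here is illustrative."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn
        conn.execute(
            "CREATE TABLE IF NOT EXISTS orders (id TEXT PRIMARY KEY, total_cents INTEGER)"
        )

    def save(self, order_id: str, total_cents: int) -> None:
        self._conn.execute(
            "INSERT OR REPLACE INTO orders VALUES (?, ?)", (order_id, total_cents)
        )

    def total(self, order_id: str) -> Optional[int]:
        row = self._conn.execute(
            "SELECT total_cents FROM orders WHERE id = ?", (order_id,)
        ).fetchone()
        return row[0] if row else None
```

`place_order` runs unchanged against either adapter, so a later database migration means writing a new adapter, not reworking the business rule.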

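This comparison captures the key trade-offs:

| Architecture | Defining idea | Fit and trade-off |
| --- | --- | --- |
| Monolithic | Entire application built, deployed, and scaled as one unit | Fast to build; strains when it outgrows the team's ability to hold it in their heads |
| Client-server | A central server provides resources, clients consume them | Simple and scales reasonably well; the default for web, mobile, and business software |
| Microservices | Independent services that each own their data and logic | Independent deployment, scaling, and team autonomy; costs distributed systems complexity |
| Event-driven | Components communicate by emitting and reacting to events | Decouples producers from consumers and scales well; causal reasoning gets harder |
| Serverless | Short-lived functions on vendor-managed infrastructure | Excels at spiky workloads; struggles with long-running state and consistent low latency |
| Layered | Layers with specific roles (presentation, logic, data) | Separation of concerns and simpler maintenance; cross-layer changes ripple everywhere |
| Hexagonal | Business logic at the center, exposed through ports and adapters | Swapping a database or framework means swapping an adapter; useful when modernization will touch the infrastructure |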

How to Design a System Architecture

Good system design is not perfected on day one. It follows from honest constraints, the discipline to pick the boring option when it's right, and a willingness to revisit decisions as reality changes. This is the practical guide to system design that most software engineering courses skip.

Define Requirements and Constraints

Start with what the system has to do and what it can't. Functional requirements describe system functions and behaviors. Non-functional requirements cover performance, availability, security, compliance, and cost. Constraints matter as much as requirements. A system that must run in a specific region, on a specific cloud provider, or integrate with a specific legacy tech stack has fewer design alternatives. Write both down before anyone draws a box.

Choose the Right Architecture Pattern

Pattern selection follows from constraints, not preference. A team of 8 engineers building a SaaS product for 100 customers should start with a modular monolith. A team of 400 engineers integrating 15 acquired companies needs something different. The cloud vendors' framework docs codify this thinking. The AWS Well-Architected Framework organizes its guidance around six pillars (Operational Excellence, Security, Reliability, Performance Efficiency, Cost Optimization, Sustainability), and the Google Cloud Well-Architected Framework covers similar ground. Both are worth reading before picking a pattern.

Gartner forecasts that over 40% of leading enterprises will adopt hybrid computing paradigm architectures by 2028, up from 8% today; the single-cloud assumption is increasingly unsafe.

Map Dependencies and Data Flows

Once a pattern is chosen, map what talks to what: every service, database, third-party integration, and data flow between them. Good dependency maps surface the hidden coupling that turns simple changes into quarter-long projects. Programs stall here because one-off PowerPoint maps go stale the week they're finished. Architecture visibility tools that keep dependency maps up to date with the live system solve the stale-documentation problem.
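Once the map exists as data rather than a slide, change-impact questions become a graph traversal. The sketch below uses a hypothetical set of services and a breadth-first search to list everything that depends, directly or transitively, on a given service.

```python
from collections import deque

# Hypothetical services: each key depends on the services in its list.
DEPENDS_ON = {
    "web": ["checkout", "catalog"],
    "checkout": ["payments", "orders"],
    "orders": ["orders-db"],
    "catalog": ["search"],
    "payments": [],
    "orders-db": [],
    "search": [],
}


def impacted_by(target: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every service that directly or transitively depends on `target`."""
    # Invert the map: for each service, who depends on it?
    dependents: dict[str, set[str]] = {}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(service)

    seen: set[str] = set()
    queue = deque([target])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in seen:  # guards against cycles in the map
                seen.add(parent)
                queue.append(parent)
    return seen
```

With this map, `impacted_by("orders-db", DEPENDS_ON)` returns `orders`, `checkout`, and `web`. That is the hidden coupling a "small" database change would touch.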

Plan for Scalability and Resilience

A good system architecture anticipates failure. Designing for failure means circuit breakers, retries with backoff, and graceful degradation. Build horizontal scaling points from the start. Systems modeling and validation against a digital model help verify that key components interact as intended before committing. Observability and operability should be core architectural requirements, with centralized logging, monitoring, and distributed tracing essential for understanding system behavior in production.
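Two of those techniques fit in a short sketch: retries with exponential backoff and a basic circuit breaker. The thresholds and delays are illustrative defaults, not recommendations, and the `sleep` and `clock` parameters exist only so the behavior can be tested without waiting.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    call: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `call`, doubling the wait after each failure."""
    for attempt in range(attempts):
        try:
            return call()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** attempt)
    raise ValueError("attempts must be at least 1")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and the call is skipped."""


class CircuitBreaker:
    def __init__(
        self,
        failure_threshold: int = 3,
        reset_after: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._threshold = failure_threshold
        self._reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self._reset_after:
                raise CircuitOpenError("circuit open; failing fast")
            self._opened_at = None  # half-open: let one trial call through
        try:
            result = fn()
        except Exception:
            self._failures += 1
            if self._failures >= self._threshold:
                self._opened_at = self._clock()
            raise
        self._failures = 0  # a success closes the circuit again
        return result
```

Retries absorb brief glitches; the breaker stops a struggling dependency from being hammered while it recovers. Catching `CircuitOpenError` and serving a cached or reduced response is one way to get graceful degradation.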

System Architecture Diagrams: Best Practices

An architecture diagram is a blueprint of a software system that shows its core components, their interconnections, and the communication channels that drive functionality. System architecture diagrams help stakeholders understand how everything fits together, and they're the primary artifact that survives handoffs between teams.

What to Include in an Architecture Diagram

A useful system architecture diagram shows components, interfaces, and information flow at one level of abstraction. Required elements: every component that matters, connections with direction and protocol labeled, external systems at the boundary, and a clear title. High-leverage additions: annotations for failure modes, scaling behavior, or decision rationale.

Common Diagramming Tools and Standards

The C4 model (Context, Container, Component, Code) is one of the most widely used approaches for system architecture diagrams because it forces clarity about the zoom level. Diagrams-as-code tools that support C4 (Structurizr, Mermaid, C4-PlantUML) produce diagrams you can version-control alongside code. Generic tools (Lucidchart, Miro, draw.io) are faster to draft in but harder to keep synced. Model-based systems engineering (MBSE) uses SysML for heavyweight cases; aerospace teams, for example, use SysML-driven systems modeling to coordinate across engineering disciplines.
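One way to keep a diagram in version control is to generate it from data. The sketch below turns a list of labeled connections into Mermaid flowchart text. It produces a plain flowchart rather than a C4 diagram, and the service names and protocols are hypothetical.

```python
def to_mermaid(edges: list[tuple[str, str, str]]) -> str:
    """Render (source, target, protocol) edges as a Mermaid flowchart.

    Assumes node names are simple identifiers (letters, digits, underscores).
    """
    lines = ["flowchart LR"]
    for source, target, protocol in edges:
        # Each edge carries direction and a protocol label.
        lines.append(f"    {source} -->|{protocol}| {target}")
    return "\n".join(lines)


diagram = to_mermaid([
    ("web", "gateway", "HTTPS"),
    ("gateway", "orders", "gRPC"),
    ("orders", "orders_db", "SQL"),
])
```

Because the output is plain text, a change to the system shows up as a readable diff in code review instead of a silently outdated image.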

System Architecture in Practice: Real-World Examples

Abstract patterns only go so far. Real systems reveal how the types combine, and a couple of examples make the theory stick.

E-commerce platform. A modern e-commerce architecture uses microservices for core domains (catalog, cart, checkout, order management), event-driven architecture to propagate changes, serverless functions for glue work (for example, image resizing), and a central API Gateway for web and mobile clients. The data layer mixes a relational database with a search engine for catalog queries. It's a canonical example of combining several styles within a single system.

Enterprise system with legacy integration. Most enterprise architecture work is not greenfield. A realistic architecture combines a cloud-native front end, an integration layer (API gateway plus event bus) that translates between new and old, and legacy backends that still own critical business logic. Enterprise architecture is less about picking a style and more about managing the boundary between today's choice and the one you inherited.

Common Challenges in System Architecture

The same problems surface on almost every system that grows past its original design.

Managing Technical Debt

Every decision accrues debt. Short-term choices (hardcoded configs, bypassed abstractions, tightly coupled services) pay back later at interest. The dangerous pattern is debt that compounds silently because no one has a current view of the system. For example, a "small" refactor that becomes a quarter-long project usually means the team lacked a current dependency map. Modernization programs that start by quantifying debt outperform those that start by picking a runtime target.

Scaling Without Overengineering

The most common failure mode is building for scale you don't have. Teams reach for microservices because Netflix uses microservices. Modular monoliths combine simplicity with strict internal modular boundaries, and they're the right answer more often than the internet suggests. Many SaaS companies stay on one through Series B. The question isn't "what architecture will I need at 100x scale?" It's "what's the minimum architecture that gets me through the next 12 months without rewriting?"

Maintaining Visibility Across Complex Systems

The architecture on the whiteboard stops being the architecture on day two. Services get added, dependencies shift, and diagrams go stale within weeks. In large organizations, no single person knows the whole system. This is where a live architecture model matters. Static diagrams explain intent; a digital twin shows the system as it exists now. Catio builds that live model from architecture and operational data, helping teams see dependencies, integrations, and change impact before they commit to a design or modernization effort. Its AI copilot Archie provides 24/7 answers grounded in that live model. Ask "what depends on this service if I refactor it?" and get an answer from the current system, not a year-old diagram.

Conclusion

System architecture is not a one-time design exercise. It's a living discipline, shaped by constraints, refined by experience, and revalidated whenever the business or workload changes. Teams that treat it that way ship faster and break less. Teams that treat architecture as a document finished in month two discover six months later that the document and the system have nothing in common.

Architecture is learnable. The patterns are finite, the trade-offs are well-documented, and the major cloud vendors publish their frameworks for free. The harder part is maintaining visibility into the system you already have. For more, see Catio's architecture management resources or book a demo to see how a live architecture digital twin keeps the diagram and the system aligned.

FAQ

What is meant by systems architecture? Systems architecture is the structural design of a system's components (hardware, software, data, interfaces) and the relationships that govern how they interact and evolve. It defines how components fit together, what interfaces they expose, and how the system behaves under load and failure. The consensus definition captured in SEBoK describes it as the fundamental concepts or properties of a system in its environment, embodied in its elements, relationships, and the principles of its design and evolution.

What are the three types of system architecture? The three most commonly cited types are monolithic, client-server, and microservices. Monolithic systems package the entire application as one deployable unit. Client-server systems split responsibilities between a shared server and its clients. Microservices architecture breaks the application into independent services that communicate over the network. In practice, most real systems combine several styles, often adding event-driven architecture or serverless components.

What is an example of a system architecture? A modern e-commerce platform is a good example. Catalog, cart, checkout, and order services run as independent microservices. Changes propagate through event-driven architecture. Serverless functions handle glue work. An API gateway fronts the system for web and mobile. The architecture combines multiple styles because no single style fits every requirement.

What is the average salary for a system architect in the US? The closest BLS occupation is Computer Network Architects. The US Bureau of Labor Statistics reports a 2024 median annual wage of $130,390, with the field projected to grow 12% from 2024 to 2034, much faster than average. Senior, staff, and principal roles sit above the median, with cloud and fintech positions at the top. Check current market data for up-to-date figures.